IMPASTO: Multiplexed cyclic imaging without signal removal via self-supervised neural unmixing

Published in BioRxiv, 2022

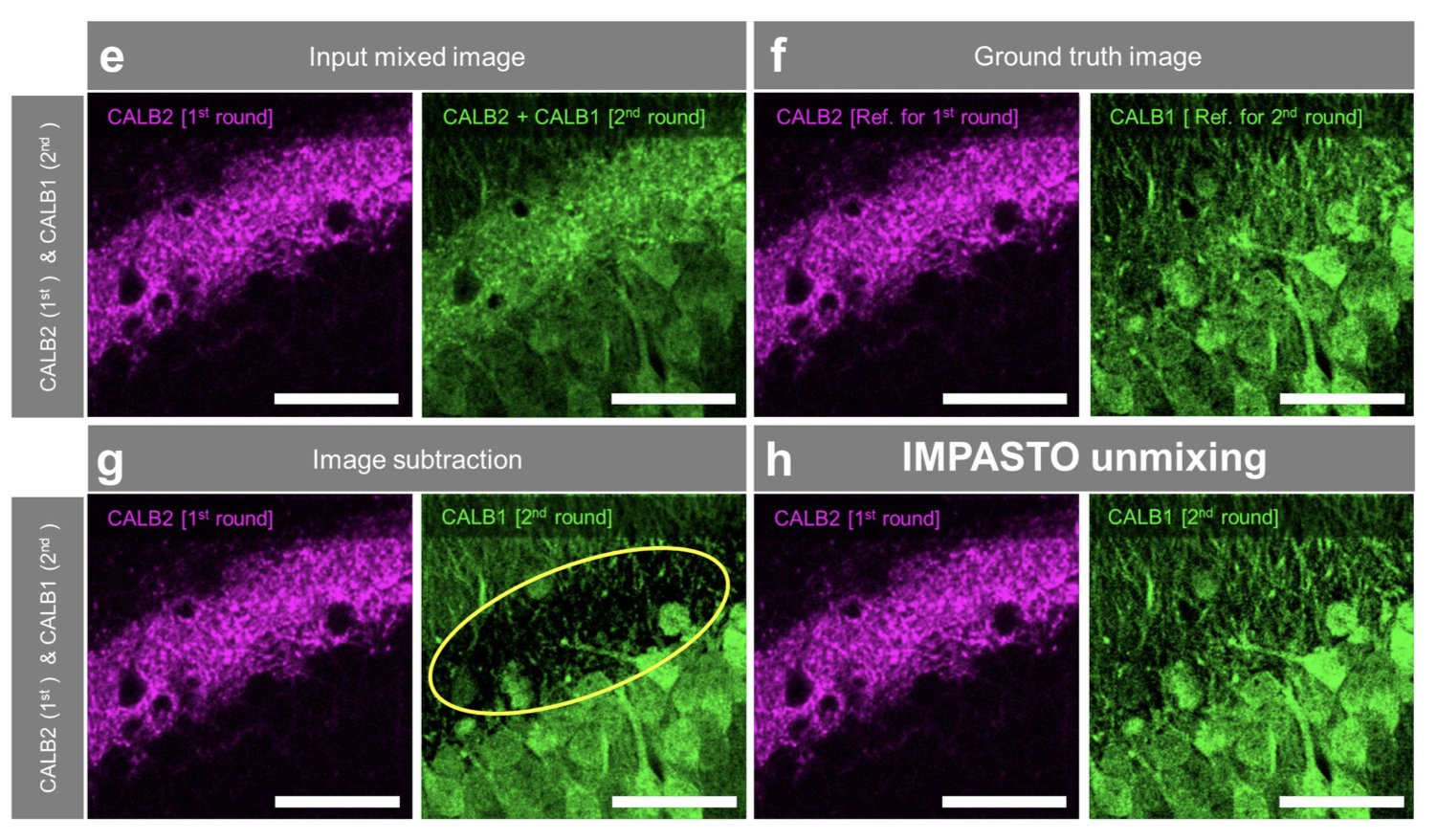

Conventional multiplexed cyclic imaging techniques have limitations, as the signal removal process can alter tissue integrity. This paper introduces IMPASTO, a novel method that iterates imaging cycles without signal removal and uses a self-supervised AI model to unmix the signals, isolating individual protein images. This technique enables high-dimensional imaging while minimizing tissue damage.
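
To make the unmixing problem concrete, here is a minimal sketch for two imaging cycles. It is not the paper's self-supervised neural model: it swaps in a naive linear decorrelation baseline. It assumes that the second cycle's image is the first protein's signal (scaled by an unknown factor) plus the second protein's signal, and that the two proteins are spatially uncorrelated. The function name `unmix_two_cycles`, the image sizes, and the synthetic data are all hypothetical.

```python
import numpy as np

def unmix_two_cycles(cycle1, cycle2):
    """Estimate how much of cycle 1 carries over into cycle 2, then subtract it.

    Assumption (not from the paper): cycle2 = alpha * cycle1 + new_signal,
    with new_signal uncorrelated with cycle1.
    """
    x = cycle1.ravel() - cycle1.mean()
    y = cycle2.ravel() - cycle2.mean()
    # Regression slope of cycle 2 on cycle 1 = estimated carry-over factor.
    alpha = float(x @ y / (x @ x))
    # Remove the carried-over signal; intensities cannot be negative.
    second = np.clip(cycle2 - alpha * cycle1, 0.0, None)
    return alpha, second

# Synthetic example: two independent "protein" images.
rng = np.random.default_rng(0)
protein_a = rng.random((64, 64))
protein_b = rng.random((64, 64))
true_alpha = 0.8

cycle1 = protein_a                           # first cycle: protein A only
cycle2 = true_alpha * protein_a + protein_b  # second cycle: A was not removed

alpha_hat, recovered_b = unmix_two_cycles(cycle1, cycle2)
```

Real protein images are rarely uncorrelated, which is one reason a learned unmixing model is attractive over a fixed linear rule like this one.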

Recommended citation: H. Kim, S. Bae, J. Cho, H. Nam, J. Seo, S. Han, E. Yi, E. Kim, Y-G. Yoon, and J-B. Chang. (2022). "IMPASTO: Multiplexed cyclic imaging without signal removal via self-supervised neural unmixing." BioRxiv preprint.

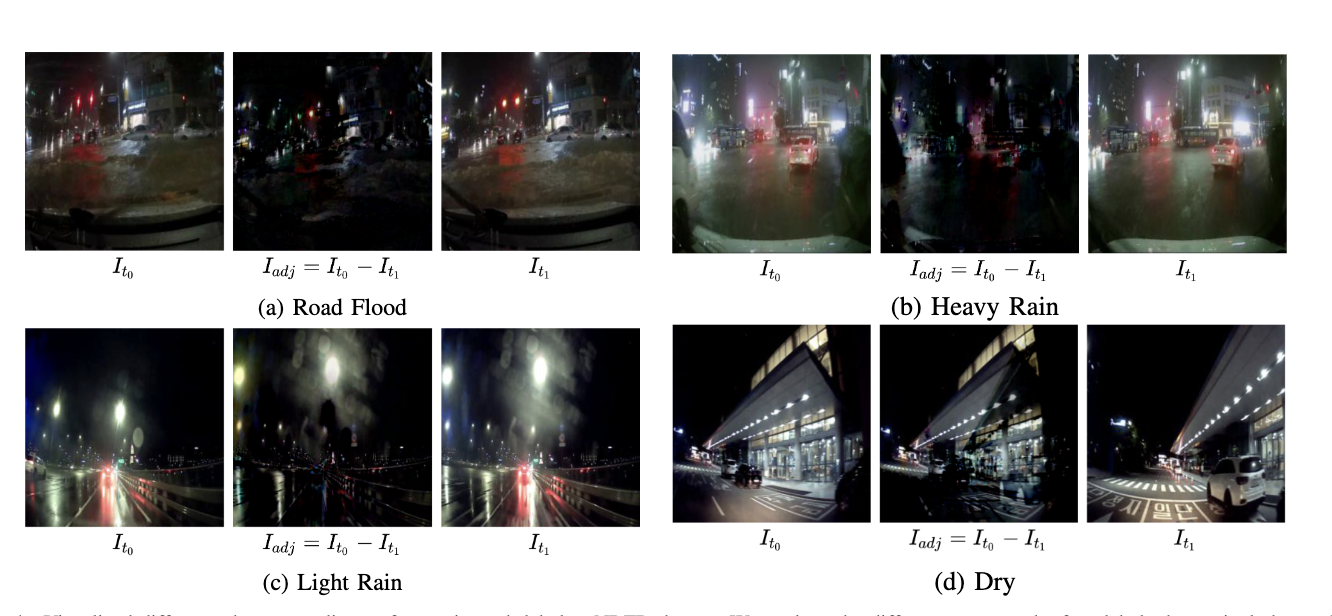

This research focuses on detecting road floods in nighttime driving videos. The study proposes a deep learning model that learns spatiotemporal representations from vehicle black-box footage to effectively detect flooded road conditions, even in low-light and poor visibility environments. The work contributes to enhancing the safety of intelligent transportation systems.

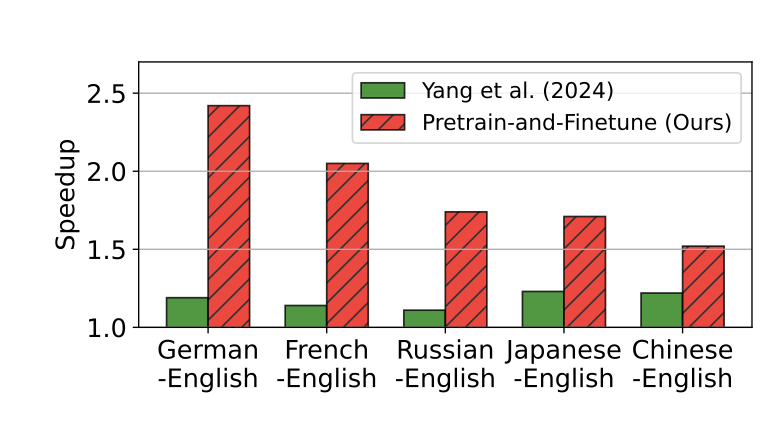

This paper addresses the high inference cost of deploying LLMs in diverse language environments. It proposes a speculative decoding method using small, language-specialized drafter models. By employing language-specific drafters optimized through pre-training and fine-tuning, this approach demonstrates a significant improvement in LLM inference speed in multilingual contexts compared to existing methods.
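
The draft-then-verify loop behind speculative decoding can be sketched with toy models. This is a simplified greedy variant, not the paper's implementation: the "target" and "drafter" are stub functions over integer tokens, the language keys in `DRAFTERS` are made up, and verification is done token by token where a real system would use one batched forward pass of the target model.

```python
from typing import Callable, Dict, List

Token = int
NextToken = Callable[[List[Token]], Token]

def target_next(seq: List[Token]) -> Token:
    """Stub for the large target model's greedy next token."""
    return (seq[-1] * 3 + 1) % 7

def make_drafter(bad_residue: int) -> NextToken:
    """Stub drafter: agrees with the target except at some positions."""
    def draft_next(seq: List[Token]) -> Token:
        guess = (seq[-1] * 3 + 1) % 7
        # Deliberately wrong whenever len(seq) % 4 == bad_residue.
        return (guess + 1) % 7 if len(seq) % 4 == bad_residue else guess
    return draft_next

# Hypothetical registry: one small drafter per language.
DRAFTERS: Dict[str, NextToken] = {"ko": make_drafter(0), "de": make_drafter(2)}

def greedy_decode(prompt: List[Token], n_new: int) -> List[Token]:
    """Baseline: one target call per generated token."""
    seq = list(prompt)
    for _ in range(n_new):
        seq.append(target_next(seq))
    return seq

def speculative_decode(prompt: List[Token], n_new: int, lang: str, k: int = 4):
    draft_next = DRAFTERS[lang]
    seq = list(prompt)
    goal = len(prompt) + n_new
    verify_passes = 0
    while len(seq) < goal:
        # 1) The drafter proposes up to k tokens.
        draft: List[Token] = []
        for _ in range(min(k, goal - len(seq))):
            draft.append(draft_next(seq + draft))
        # 2) The target verifies the proposal (counted as one pass).
        verify_passes += 1
        accepted: List[Token] = []
        for tok in draft:
            expected = target_next(seq + accepted)
            if tok == expected:
                accepted.append(tok)
            else:
                accepted.append(expected)  # keep the target's token, drop the rest
                break
        seq.extend(accepted)
    return seq, verify_passes

baseline = greedy_decode([1], 12)
spec_out, passes = speculative_decode([1], 12, lang="ko")
```

In this toy setup the speculative output matches plain greedy decoding exactly, while the number of verification passes is smaller than the number of generated tokens. The better the drafter fits the input language, the more draft tokens are accepted per pass.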

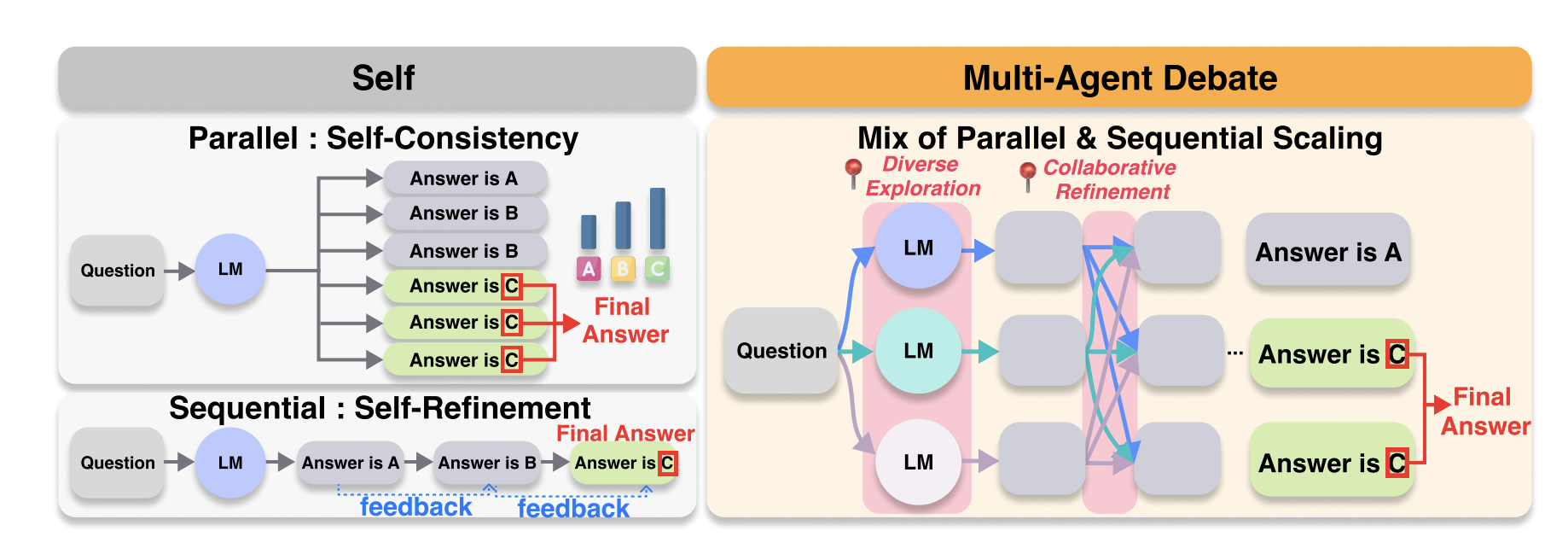

This study analyzes the effectiveness of Multi-Agent Debate (MAD) systems, where multiple LLM agents collaboratively solve problems through discussion. The research conceptualizes MAD as a test-time scaling technique and compares its performance against single agents in tasks like mathematical reasoning and safety. Findings indicate that MAD is more effective for more difficult problems or less capable models, and that agent diversity is crucial for safety-related tasks.
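
A debate round can be pictured as each agent revising its answer after seeing its peers' answers, followed by a majority vote. The sketch below is only an illustration of that loop, not the protocol evaluated in the study: the agents are stub functions rather than LLMs, and the `Agent` signature, `make_stub_agent`, and `debate` are hypothetical names.

```python
from collections import Counter
from typing import Callable, List

# Hypothetical agent signature: (question, peer_answers) -> answer
Agent = Callable[[str, List[str]], str]

def make_stub_agent(initial: str, stubborn: bool) -> Agent:
    """Stub agent: keeps its answer if stubborn, else follows its peers."""
    def agent(question: str, peer_answers: List[str]) -> str:
        if stubborn or not peer_answers:
            return initial
        # Adopt the most common answer among the other agents.
        return Counter(peer_answers).most_common(1)[0][0]
    return agent

def debate(question: str, agents: List[Agent], rounds: int = 2) -> str:
    # Round 0: every agent answers independently.
    answers = [agent(question, []) for agent in agents]
    for _ in range(rounds):
        # Each agent sees all answers except its own, then may revise.
        answers = [
            agent(question, answers[:i] + answers[i + 1:])
            for i, agent in enumerate(agents)
        ]
    # The final answer is the majority vote.
    return Counter(answers).most_common(1)[0][0]

agents = [
    make_stub_agent("4", stubborn=True),
    make_stub_agent("4", stubborn=True),
    make_stub_agent("5", stubborn=False),
]
final_answer = debate("What is 2 + 2?", agents)
```

Each extra round costs one more call per agent, which is why the study frames debate as spending additional test-time compute.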

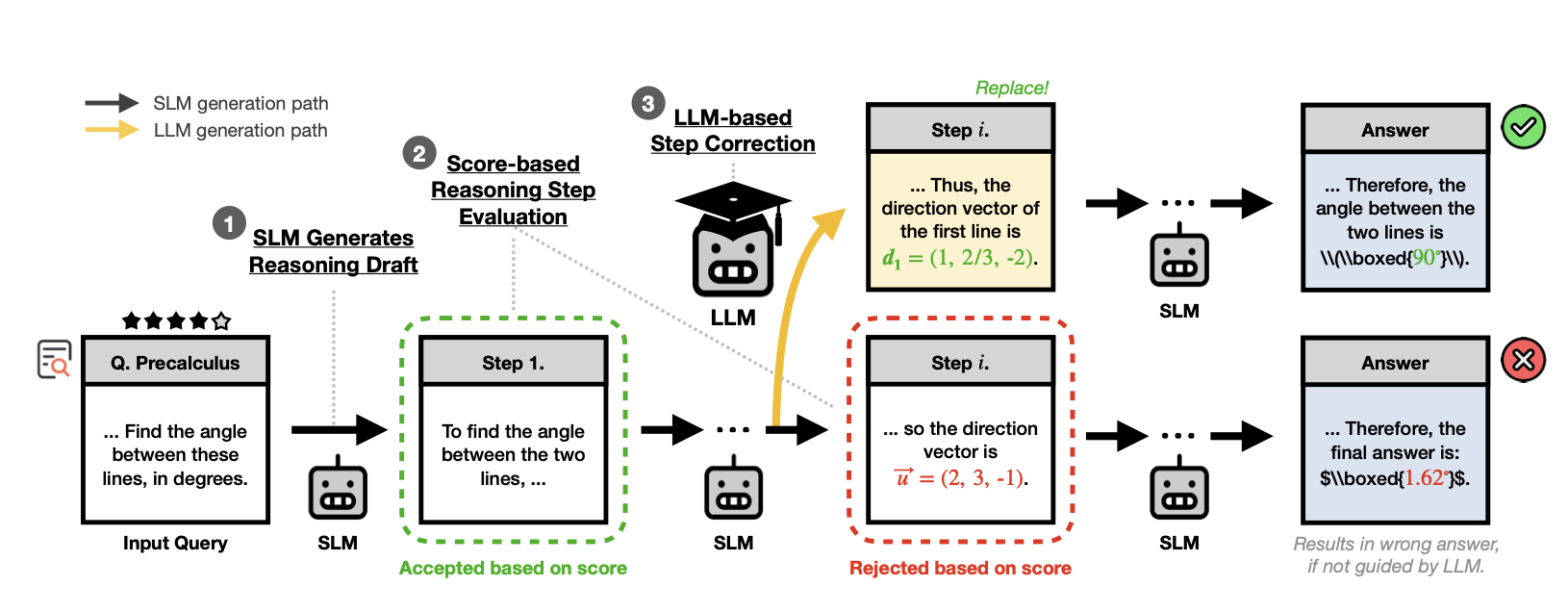

This paper proposes the SMART framework to address the challenge of Small Language Models (SLMs) in multi-step, complex reasoning tasks. An LLM selectively intervenes to assist the SLM’s reasoning process only when necessary. This approach evaluates the confidence of the SLM’s reasoning results, and if the score is low, the LLM provides a corrected reasoning step, significantly boosting SLM performance while minimizing LLM usage.

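
The confidence-gated intervention described above can be sketched as a simple loop. This is a minimal illustration under stated assumptions, not the SMART implementation: the two models are scripted stubs, and the threshold value, the `ANSWER` stop marker, and every function name are hypothetical.

```python
from typing import Callable, List, Tuple

# Hypothetical signatures: the SLM returns a step plus a confidence score,
# the LLM returns a replacement step for the same position.
SlmStep = Callable[[str, List[str]], Tuple[str, float]]
LlmStep = Callable[[str, List[str]], str]

def gated_reasoning(question: str, slm_step: SlmStep, llm_step: LlmStep,
                    threshold: float = 0.7, max_steps: int = 8):
    steps: List[str] = []
    llm_calls = 0
    for _ in range(max_steps):
        step, confidence = slm_step(question, steps)
        if confidence < threshold:
            # Low confidence: ask the LLM for a corrected step instead.
            step = llm_step(question, steps)
            llm_calls += 1
        steps.append(step)
        if step.startswith("ANSWER"):
            break
    return steps, llm_calls

# Scripted stubs standing in for real models.
SLM_SCRIPT = [("x = 2 + 3", 0.9), ("x * 4 = 25", 0.3), ("ANSWER 20", 0.95)]
LLM_SCRIPT = {1: "x * 4 = 20"}

def stub_slm(question: str, steps: List[str]) -> Tuple[str, float]:
    return SLM_SCRIPT[len(steps)]

def stub_llm(question: str, steps: List[str]) -> str:
    return LLM_SCRIPT[len(steps)]

trace, n_llm_calls = gated_reasoning("What is (2 + 3) * 4?", stub_slm, stub_llm)
```

Here the LLM is consulted only for the one low-confidence step, so most of the reasoning still runs on the small model.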